Why you can't vibe-code your way to a better production agent

Claude Code with max thinking is excellent. It's also not the right tool for what you're trying to do.

It's Friday night. Your AI agent has been live in production for three weeks. You pull thirty trajectories where the agent's behaviour diverged from what you'd expect. You drop them into Claude Code with max thinking, attach the relevant skill, and ask for a fix.

What comes back is genuinely impressive. The model walks through each failure. Identifies a pattern: the skill assumes a particular context field is always populated, and in a specific class of cases it isn't. It proposes a defensive check, a fallback prompt path, and a small refactor of the rubric. You read through the reasoning. It is sound. You apply the diff, run it back against the thirty cases, they all pass. You ship.

Monday morning, four cases that worked last week now fail. Tuesday, a customer complaint surfaces a regression you can't attribute to anything specific. Three weeks later, a similar pattern recurs, and nobody remembers what was tried last month. Six weeks later, a model upgrade ships and your skill quietly stops doing what it used to. Six months later, an auditor asks why the agent handles this category of case this way, and nobody can produce a coherent answer.

This post is about why that loop breaks — and why the break is not Claude Code's fault.

Where Claude Code is excellent

Frontier models with extended thinking are genuinely the right primitive for a class of tasks. Drafting a candidate skill from a written spec. Critiquing a prompt against a stated objective. Reading a single trace and explaining what went wrong. Proposing the diff. We use Claude Code internally, every day, for exactly these tasks — they are the parts of agent work that are point-in-time, well-scoped, and benefit from a smart reader with full session context.

Where the tool stops is anywhere the work extends beyond a single session.

What's outside the session

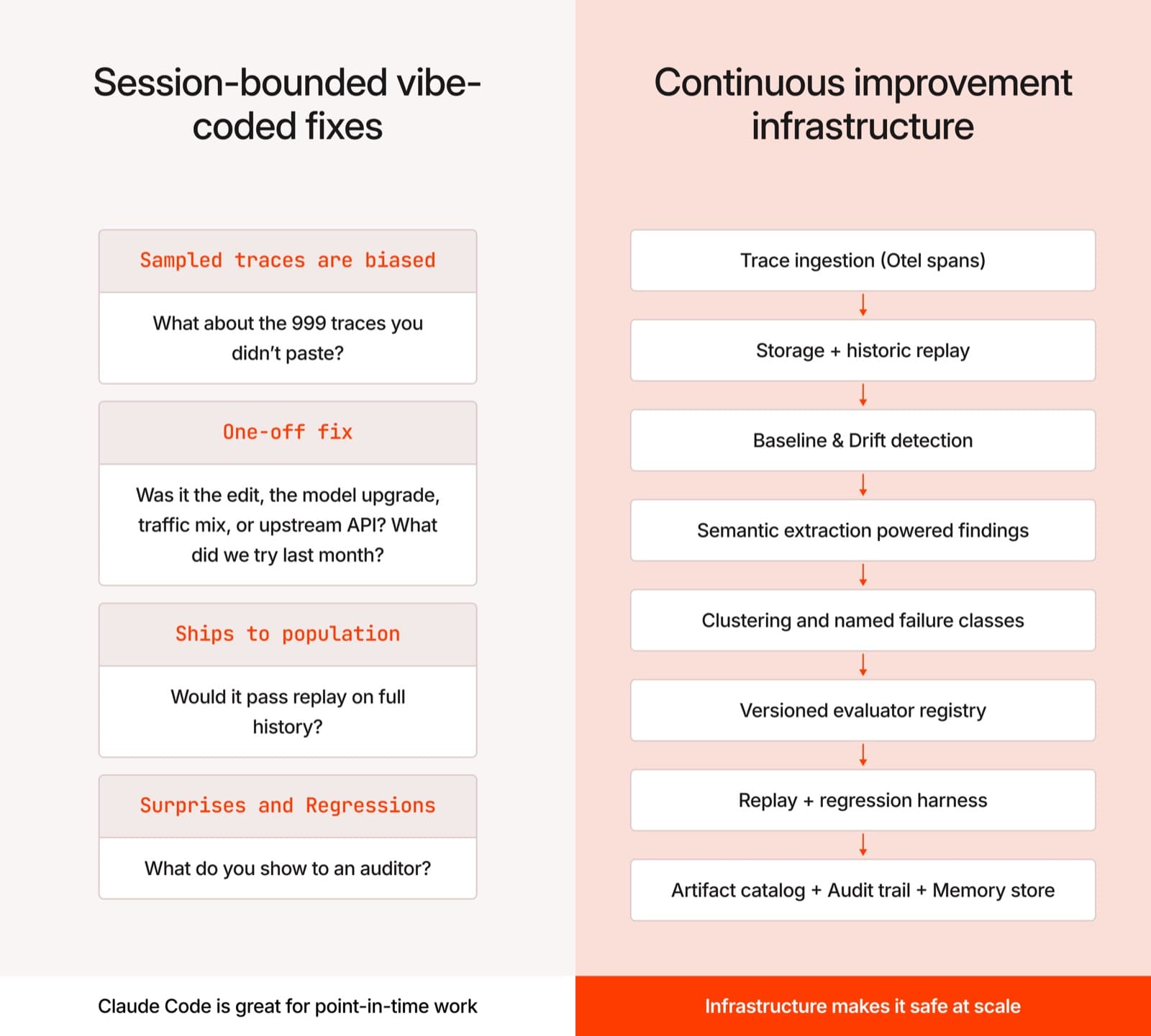

A production AI agent is not a piece of code being debugged. It is a distribution of behaviour, varying with time, across a population of trajectories. Improving it requires answers to questions that no session-bounded tool can answer.

“Of the 999 trajectories you didn't paste into the chat, how many were already passing? How many have started failing in the last seven days? How many are about to enter a new failure class that has no name yet?”

A vibe-coding session sees only what you handed it. The thirty traces in your clipboard are a sample, not a population — and they are a biased sample, because you noticed them by hand. Whatever pattern you fix is fit to that sample. Whatever fix you ship runs across the whole population, including parts of it that nobody has examined. The patch lands cleanly on the failures you saw and unevenly on the ones you didn't.

“When a key metric drops next week, was it the skill edit you just shipped, or the model upgrade that landed yesterday, or the traffic mix shifting in ways nobody flagged, or the upstream API quietly returning a new error code?”

Attribution is a longitudinal operation. It requires a baseline of behaviour from before the change, a model of the change itself, and a comparison across time. None of that lives inside a chat session. The model in front of you cannot see what your agent was doing in week three; it can only see what you tell it now. Without a baseline, attribution collapses to “something is different,” which is not actionable.

“What was tried last month? What worked? What was rejected, and why?”

A vibe-coding session has no memory of any prior session. Every interaction starts from zero. Knowledge that should compound — we tried a similar defensive check in March, and it caused a regression on a different category of cases that nobody re-tested — sits in nobody's head, unless someone wrote it down. It usually doesn't get written down. The same fix gets re-derived every quarter; the same regression gets re-discovered every quarter.

“The change you just made — would it pass a regression test against the full traffic history?”

Running a candidate change against months of historical traffic, scoring it with a versioned set of evaluators, comparing the score distribution to the prior, blocking promotion if previously-passing cases now fail — this is a procedure with measurable steps. It is not a prompt. It is infrastructure.

“Six months from now, when an auditor or a regulator asks why the agent does what it does — what do you point to?”

A diff is not evidence. A trial record is. A versioned artifact catalogue is. A plain-language record of what was learned, when, on what data — that is what survives a compliance review. A chat transcript does not.

What it would take to make this work with vibes alone

If you tried to build the answer-surface for those questions out of weekend hacking, here is the rough build list:

- A trace ingestion layer that captures every agent run, OTel-compatible, with structured spans

- Storage that supports both real-time queries and historical replay across months of traffic

- A baseline model for each measurable property of the agent's behaviour, updated continuously

- Drift detection across multiple dimensions, with attribution to source

- A semantic extraction layer that turns raw traces into structured findings

- A clustering layer that groups findings into named failure classes, weighted by population

- An evaluator registry where rubrics are versioned and re-runnable across history

- A replay engine that scores candidate changes against the full traffic record

- A regression-detection harness that compares score distributions across versions

- An artifact lifecycle layer with provenance, versioning, and reversibility

- A plain-language memory store that captures every validated learning

- A backfill mechanism so new evaluators can re-score the past

- An audit trail that ties every behavioural change to its evidence chain

That is not a weekend. It is a quarter, minimum, of full-time engineering by people whose full attention is not on improving your agent. By the time you have shipped a working version of any one of these layers, the agent has drifted further, the model has been upgraded, and the eval set you started with is stale. You are now a build-your-own-agent-infra company instead of the company you were trying to be.

The right relationship

Claude Code is excellent inside the right scope. So is psql. So is git. None of them is a production system on its own. You query a production database with psql; you do not run a production database fleet on psql. You ship code with git; you do not ship production software with git alone. You run that fleet on observability, replication, slow-query analysis, and alerting alongside psql. You ship production software with CI, CD, environment management, and rollback infrastructure alongside git.

The interactive tool is correct for what it does. The infrastructure is what makes it safe at scale.

Claude Code with max thinking belongs inside the same kind of relationship. It is the right primitive for the point-in-time work — formulating a hypothesis, drafting a fix, critiquing a single trace. It belongs inside an infrastructure that handles measurement, attribution, regression, memory, and audit. We use frontier models all the way through that infrastructure. What we add is everything around them.

For the broader picture of what that infrastructure looks like and how it operates as a closed loop, see our companion post: Production AI agents need research infrastructure, not iteration.

You can vibe-code a fix. You cannot vibe-code an improvement program.

If you are running an AI agent in production and the Monday-morning surprise has happened to you more than once, let's talk.